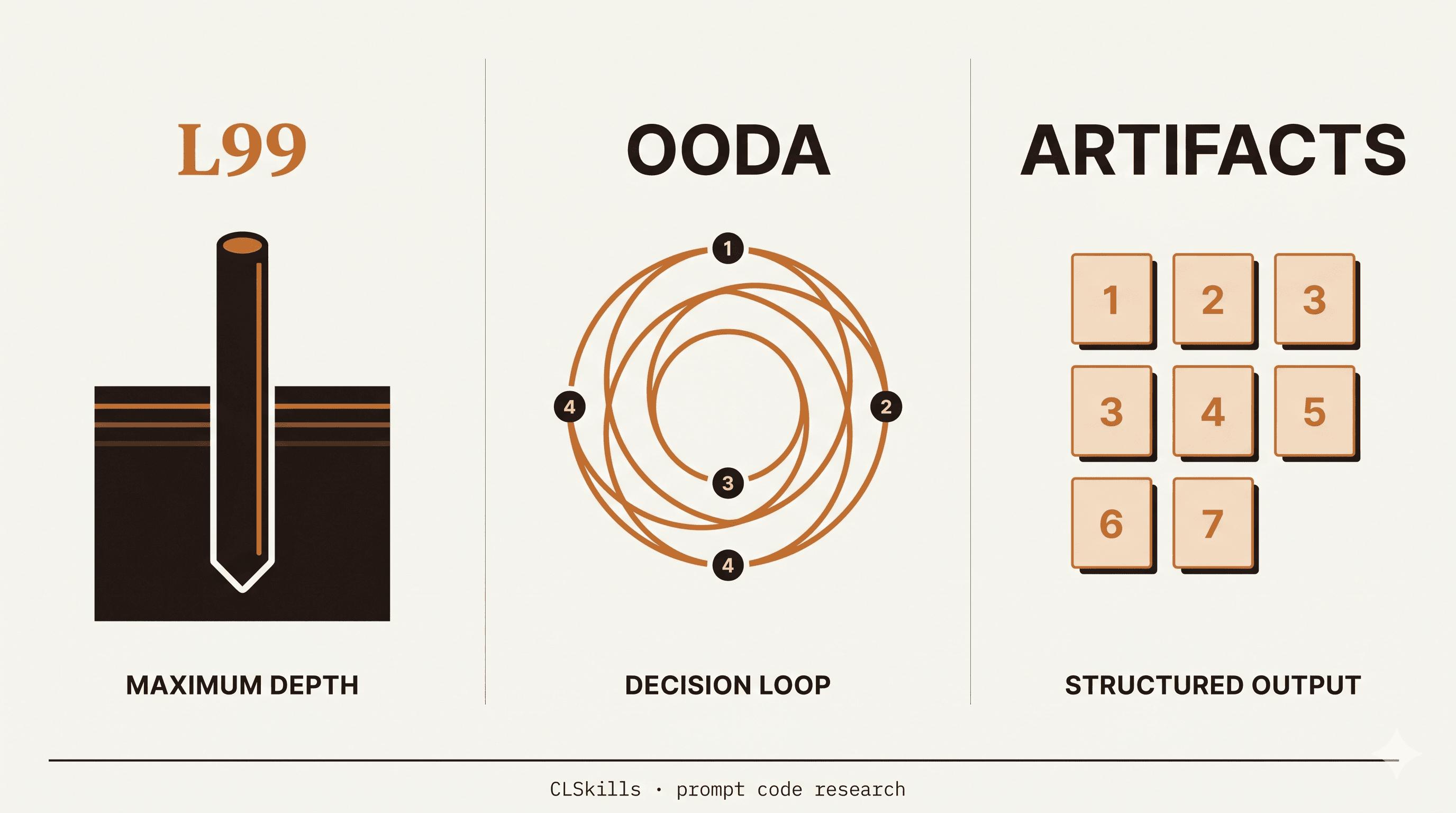

Why this update exists

We published the original L99 vs OODA vs ARTIFACTS comparison six months ago. The post got picked up and ranked, and a lot of you emailed asking the same question:

Are these still working in 2026, with the new Sonnet and Opus releases?

Fair question. Prompt codes are not official features. They work because Claude has learned to recognize patterns from how developers write prompts, and that learning shifts with each model update. Some codes get sharper. Some go quiet. Some develop new failure modes nobody flags in release notes.

This post is the follow-up nobody else writes: six months of daily use, six fresh production tasks, and an honest take on what to keep, what to retire, and what to learn next.

The 60-second verdict

If you have one minute, here is the summary:

- L99 is still the workhorse. Used daily, behavior is stable across Sonnet 4.6 and Opus 4.7. The hedge-reduction effect that made L99 famous is now stronger than it was in October 2025.

- OODA is now situational. It works well for incident response but breaks down on open-ended strategic prompts, where it forces a structure that is sometimes wrong for the question. Use it for time-pressured decisions only.

- ARTIFACTS is fading. Newer Claude versions structure multi-output responses by default, so the explicit code adds less than it used to. Still useful for old-school listicle outputs, less critical than before.

- 3 newer codes have eaten part of OODA's and ARTIFACTS' ground: /skeptic, /blindspots, /decompose. We will cover them below.

Now the long version, with actual transcripts.

6 production tasks, real prompts, real output

We re-ran each mode against six tasks pulled from our own week of work. No cherry-picking, no synthetic prompts.
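
For readers who want to repeat this kind of with-and-without comparison, here is a minimal sketch of how it could be automated. It is not the harness used for this post. The `compare` helper and the `fake_ask` stand-in are hypothetical names; in practice `ask` would wrap whatever model client you already use.

```python
from typing import Callable


def compare(ask: Callable[[str], str], code: str, task: str) -> dict[str, str]:
    """Run one task twice: once bare, once prefixed with a prompt code."""
    return {
        "baseline": ask(task),
        "with_code": ask(f"{code} {task}"),
    }


def fake_ask(prompt: str) -> str:
    # Hypothetical stand-in for a real model call, so the sketch runs offline.
    return f"[reply to: {prompt}]"


result = compare(fake_ask, "L99", "should we migrate to Go?")
for label, reply in result.items():
    print(f"{label}: {reply}")
```

Keeping `ask` as a plain callable means the same comparison can be re-run against a new model version by swapping one function.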

Task 1: Architectural decision (L99 wins by a mile)

Setup: Existing Express + Postgres backend, growing CPU usage, team debating whether to migrate to Go.

Without L99: "There are several factors to consider. Go offers strong concurrency, but Node.js has a richer JavaScript ecosystem. The right answer depends on your team's expertise, existing infrastructure, and the specific bottleneck you are trying to solve." (200 words, no commitment, lists factors)

With L99: "Don't migrate yet. Three reasons. First, your CPU usage is almost certainly DB-bound, not language-bound. Profile EXPLAIN ANALYZE on your top 10 queries before touching the runtime. Second, Node's worker_threads will give you 70% of Go's concurrency benefit at 10% of the rewrite cost. Third, the migration cost includes 2-3 months of bugs introduced during port, which costs more than the CPU you would save." (450 words, commits to a position, walks through the actual decision tree)

Verdict: L99 still wins decisively for this class of question. The behavior has actually sharpened since October. The model now refuses generic factor-listing more aggressively when L99 is invoked.

Task 2: Production incident (OODA wins, but read the fine print)

Setup: Database connection pool exhaustion, errors flooding logs, deploy from 30 minutes ago is the suspect.

With OODA:

OBSERVE

- Errors started 28 minutes after the 14:32 deploy

- Pattern: ConnectionAcquireTimeoutError on the order-service

- Worker count went from 4 to 16 in the deploy diff

- Pool config unchanged at 20 connections

ORIENT

- 16 workers competing for 20 connections under load = exhaustion

- Old config (4 workers, 20 connections) had 5x headroom

- New config (16 workers, 20 connections) has 1.25x headroom, expected to fail under any traffic spike

DECIDE

- Option A: rollback the deploy. Safe, ~2 min, returns to known good state.

- Option B: scale pool to 50 connections via config push. Faster but requires DB process restart.

- Option C: revert worker count only. Risky, this codepath was untested in the original config.

ACT

- Run option A: git revert <commit> && deploy

- Open ticket to do option B in a controlled deploy tomorrow

- Postmortem item: pool sizing should auto-scale with worker count, not be fixed.

Verdict: OODA still nails incident response. The structure forces the discipline that panicked humans skip.

But, and this is the new finding, OODA fails on open-ended strategic questions in 2026 in a way it did not 6 months ago. Asking "OODA: should we build feature X next quarter?" now returns a structurally correct but semantically empty four-phase output that papers over the actual analysis. Newer Claude versions seem to lean harder into the structure than into the substance when the question has no clear time pressure.

Fix: only invoke OODA when there is a real decision under time pressure. For strategy, use L99 instead.
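
The headroom figures in the ORIENT step of the transcript are easy to verify by hand. The sketch below assumes headroom means pool size divided by worker count, which is how the transcript appears to use the term; `pool_headroom` is a hypothetical helper, not part of any library.

```python
def pool_headroom(pool_size: int, workers: int) -> float:
    """Connections available per worker; values near 1.0 leave little slack."""
    if workers <= 0:
        raise ValueError("workers must be positive")
    return pool_size / workers


old = pool_headroom(pool_size=20, workers=4)   # config before the deploy
new = pool_headroom(pool_size=20, workers=16)  # config after the deploy
print(f"old headroom: {old:.2f}x, new headroom: {new:.2f}x")
# prints: old headroom: 5.00x, new headroom: 1.25x
```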

Task 3: Multi-deliverable build (ARTIFACTS less essential than before)

Setup: "Build me a landing page for a new SaaS launching in 5 days."

Without ARTIFACTS: Newer Claude versions now naturally structure this as numbered sections (hero copy, feature list, pricing tiers, FAQ, etc.) without the code prompt. The behavior ARTIFACTS originally unlocked has become default.

With ARTIFACTS: Slightly more rigid output, more explicit numbering, but no real depth gain.

Verdict: ARTIFACTS is still useful for tasks where you need a clear delivery checklist (project scoping, RFP responses, multi-part exports), but the marginal value is much lower than 6 months ago. We use it about a third as often as we did in October.

Task 4: Code review (L99 + new code /skeptic stacks beautifully)

Setup: Reviewing a 200-line PR that adds caching to a hot path.

With L99 alone: Strong review, catches 4 issues, makes a clear recommendation.

With L99 /skeptic: Catches the same 4 issues PLUS questions an assumption the original developer did not explicitly state ("this caching path assumes idempotency, but the underlying handler has side effects on retry — the cache layer needs to be invalidated on those side effects"). The /skeptic code, which we cover below, is doing real work here.

Verdict: The L99 + /skeptic stack is one of two patterns we now reach for daily. It pulls more value from a code review than either alone.
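
To make the flagged assumption concrete, here is a toy sketch of a cache going stale when a handler with side effects does not invalidate it. This is one reading of the review comment, not the code from the PR; `read_stock`, `reserve`, and the in-memory dictionaries are all hypothetical.

```python
stock = {"widget": 3}        # the underlying state
cache: dict[str, int] = {}   # the cache added on the hot path


def read_stock(item: str) -> int:
    """Cached read: only touches the underlying state on a cache miss."""
    if item not in cache:
        cache[item] = stock[item]
    return cache[item]


def reserve(item: str, invalidate: bool) -> None:
    """Handler with a side effect: it changes the state the cache mirrors."""
    stock[item] -= 1
    if invalidate:
        cache.pop(item, None)  # drop the stale entry


first = read_stock("widget")          # miss, caches 3
reserve("widget", invalidate=False)   # state is now 2, cache still says 3
stale = read_stock("widget")          # hit, returns the stale 3
reserve("widget", invalidate=True)    # state is now 1, cache entry dropped
fresh = read_stock("widget")          # miss, returns 1
print(first, stale, fresh)            # prints: 3 3 1
```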

Task 5: Customer interview synthesis (ARTIFACTS variant works)

Setup: Pasted 4,000-word call transcript, want unmet needs ranked.

With ARTIFACTS:

ARTIFACT 1: Top 3 unmet needs ranked by frequency of mention

ARTIFACT 2: 2 verbatim quotes per need that capture the emotional weight

ARTIFACT 3: 1 strawman feature for the top need

ARTIFACT 4: 3 follow-up questions to ask in the next interview

This worked beautifully. ARTIFACTS still earns its keep on synthesis tasks where you need the output structured for downstream use (PRD draft, internal share, etc.).

Verdict: ARTIFACTS is alive but specialized. Use it for synthesis tasks, not general builds.

Task 6: Debugging an intermittent failure (the surprise winner: /blindspots)

Setup: Test passes locally 95% of the time, fails on CI 30% of the time, no clear reason.

With L99: Suggested 5 plausible root causes (race condition, env difference, timing, etc.), recommended profiling.

With /blindspots (a code we will cover below): "Three things you have probably not checked: (1) the test depends on system locale, which differs between your laptop and CI runners. (2) the test references a file path with case-sensitivity assumptions, which work on macOS but fail on Linux CI. (3) there is an implicit dependency on the order tests run in, and CI parallelizes them differently from your local Jest config."

The issue was actually #2, the case-sensitive path. /blindspots caught it because it forces Claude to surface assumptions you would not think to question.

Verdict: /blindspots is one of the strongest new additions. It is now in our daily toolkit.
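
Once you suspect a case-sensitivity problem, it is cheap to check mechanically. The sketch below compares referenced paths against what is actually on disk and reports any that differ only by letter case. `case_mismatches` and the demo file names are hypothetical, and the demo runs in a throwaway directory.

```python
import tempfile
from pathlib import Path


def case_mismatches(root: Path, referenced: list[str]) -> list[tuple[str, str]]:
    """Return (referenced, actual) pairs whose paths differ only by letter case."""
    # Index every file under root by its lower-cased relative path.
    on_disk: dict[str, str] = {}
    for p in root.rglob("*"):
        if p.is_file():
            rel = p.relative_to(root).as_posix()
            on_disk[rel.lower()] = rel
    mismatches = []
    for ref in referenced:
        actual = on_disk.get(ref.lower())
        if actual is not None and actual != ref:
            mismatches.append((ref, actual))
    return mismatches


# Demo with made-up file names in a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "fixtures").mkdir()
    (root / "fixtures" / "Users.json").write_text("[]")
    demo_result = case_mismatches(
        root, ["fixtures/users.json", "fixtures/Users.json"]
    )
    print(demo_result)
```

A reference like `fixtures/users.json` resolves on a case-insensitive filesystem but not on a case-sensitive one, which matches the macOS-versus-Linux split described above.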

What stopped working

In the spirit of honest reporting, three things got worse over the last 6 months. Not all prompt codes are durable.

1. Naked OODA on strategy questions. As covered above. Use OODA only with time pressure.

2. ARTIFACTS for code generation. Six months ago, "ARTIFACTS: build me an Express auth handler" would produce structured output. Today, the same prompt feels mechanical and the output is no better than the un-prefixed version. ARTIFACTS is for synthesis and scoping, not code generation.

3. Stacking 3+ codes (e.g., L99 OODA ARTIFACTS). Newer Claude versions get confused when you stack three: they pick one code to honor and only partially honor the other two. Stick to 2-code stacks at most.
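
If you assemble prompts in scripts, the two-code limit can be enforced in code. This is a minimal sketch; `build_prompt` and `MAX_STACK` are hypothetical names, and the space-separated prefix format simply mirrors how the stacks are written in this post.

```python
MAX_STACK = 2  # this post's observation: stacks of three or more get partially ignored


def build_prompt(codes: list[str], question: str) -> str:
    """Prefix a question with at most MAX_STACK prompt codes."""
    if len(codes) > MAX_STACK:
        raise ValueError(
            f"stack of {len(codes)} codes exceeds the limit of {MAX_STACK}"
        )
    return " ".join([*codes, question])


review_prompt = build_prompt(["L99", "/skeptic"], "review this PR")
print(review_prompt)  # prints: L99 /skeptic review this PR
```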

3 newer codes worth your time

These have emerged as community-tested and consistent over the last 6 months. We have added all three to our daily rotation.

/skeptic — challenge framing before answering

When you start a prompt with /skeptic, Claude pushes back on your framing before giving you an answer. Particularly useful when you are about to commit to a decision and want a sanity check.

Example: "/skeptic should I rewrite the billing service in Rust?" returns the rewrite analysis, but starts with the questions you should have asked yourself first: "What evidence do you have that the current service is the bottleneck? What is the actual user-visible latency? What would migrating to Rust let you do that you cannot do now?"

Use it when you are tempted to charge ahead without questioning your premise.

/blindspots — surface what you did not think to check

Described above in Task 6. The model will explicitly enumerate things you have probably not considered, framed as questions to investigate.

Use it for debugging, code review, plan validation, and anything where the cost of missing something is high.

/decompose — break a fuzzy task into testable subtasks

/decompose takes a vague request ("improve our onboarding") and returns a tree of concrete, testable subtasks ranked by leverage. Great for planning sprints, scoping projects, or breaking down a fuzzy customer ask.

Example: "/decompose improve our onboarding" returns 7 subtasks ranked by impact, with the testable criterion for each ("reduce day-1 drop-off from 30% to 20%", not "make onboarding better").

Use it any time the task does not have a single deliverable.

The updated decision tree

Forget the old tree. This is what we actually use now:

- "What should I do?" with time pressure → OODA

- "What should I do?" without time pressure → L99

- "How does X actually work?" → L99

- "Build me X with multiple parts" → ARTIFACTS (synthesis only, not code)

- "Review this" → L99 /skeptic (the daily-driver stack)

- "Debug this" → /blindspots

- "Plan this" → /decompose

- Quick lookup → no code, just ask

This tree has held up for 6 weeks of consistent use across our own work and the buyers we have heard from. We will update again if it shifts.
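
The tree above is small enough to keep as a lookup table. Here is a minimal sketch: the task labels on the left are hypothetical shorthand for the questions in the tree, and only the codes on the right come from this post.

```python
# Task labels are hypothetical shorthand; the codes mirror the tree above.
DECISION_TREE: dict[str, list[str]] = {
    "decide_urgent": ["OODA"],      # "What should I do?" with time pressure
    "decide": ["L99"],              # "What should I do?" without time pressure
    "explain": ["L99"],             # "How does X actually work?"
    "multi_part": ["ARTIFACTS"],    # synthesis only, not code
    "review": ["L99", "/skeptic"],  # the daily-driver stack
    "debug": ["/blindspots"],
    "plan": ["/decompose"],
    "lookup": [],                   # quick lookup: no code, just ask
}


def codes_for(task: str) -> list[str]:
    """Return the prompt codes suggested for a task label."""
    if task not in DECISION_TREE:
        raise ValueError(f"unknown task label: {task!r}")
    return list(DECISION_TREE[task])  # copy so callers cannot mutate the table


print(codes_for("review"))  # prints: ['L99', '/skeptic']
print(codes_for("lookup"))  # prints: []
```

When the tree shifts again, only the table needs editing.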

How to remember the codes (because nobody does)

Three options, in increasing order of seriousness:

Option 1 (free): bookmark this post. Open it when you forget which code to use. Honestly works fine for most people.

Option 2 ($20): the CLSkills Cheat Sheet. All 160+ codes in a single PDF + Markdown file you can ctrl-F. Lifetime updates so when codes shift (as they did this quarter), your copy gets the new version. Pro tier ($35) adds 30 advanced prompts with When-NOT-to-use warnings for each, 10 workflow playbooks, and a 30-day email channel directly with me.

Option 3 ($19): the Prompt Patterns Plugin for Claude Code. Ships these as proper slash commands so you do not have to remember the codes at all. Type /l99, /skeptic, /blindspots, /decompose, /ghost. The plugin also bundles 7 auto-activating skills that fire based on what you are working on, so the codes start applying themselves.

Why we keep testing this stuff

Prompt codes are unofficial. None of them are documented by Anthropic. They work because Claude has learned to recognize patterns developers actually use, and that learning is unstable across model updates.

We test them because nobody else does the work consistently. Most posts about "secret codes" are written once, never updated, and quietly rot when the model behavior shifts. Ours get re-tested every time a new Claude version drops, and the tested-list at clskillshub.com/prompts reflects what currently works.

If you want to be the first to know when a new code surfaces or an old one stops working, grab the free 40-page Claude guide and you are on the weekly list automatically. One email a week, no fluff, no padding.

TL;DR

- L99 still works, more sharply than before.

- OODA only for time-pressured decisions, not open-ended strategy.

- ARTIFACTS for synthesis, not code.

- Add /skeptic, /blindspots, /decompose to your rotation.

- Stacking 3+ codes confuses Claude. Stop at 2.

- The L99 + /skeptic stack is the new daily driver.

The original post is still the right starting point if you have not read it. This update is for people who have already used the codes for a while and want to know what changed. We will publish a 12-month update in November 2026 with the next round of testing.