Why this question matters now

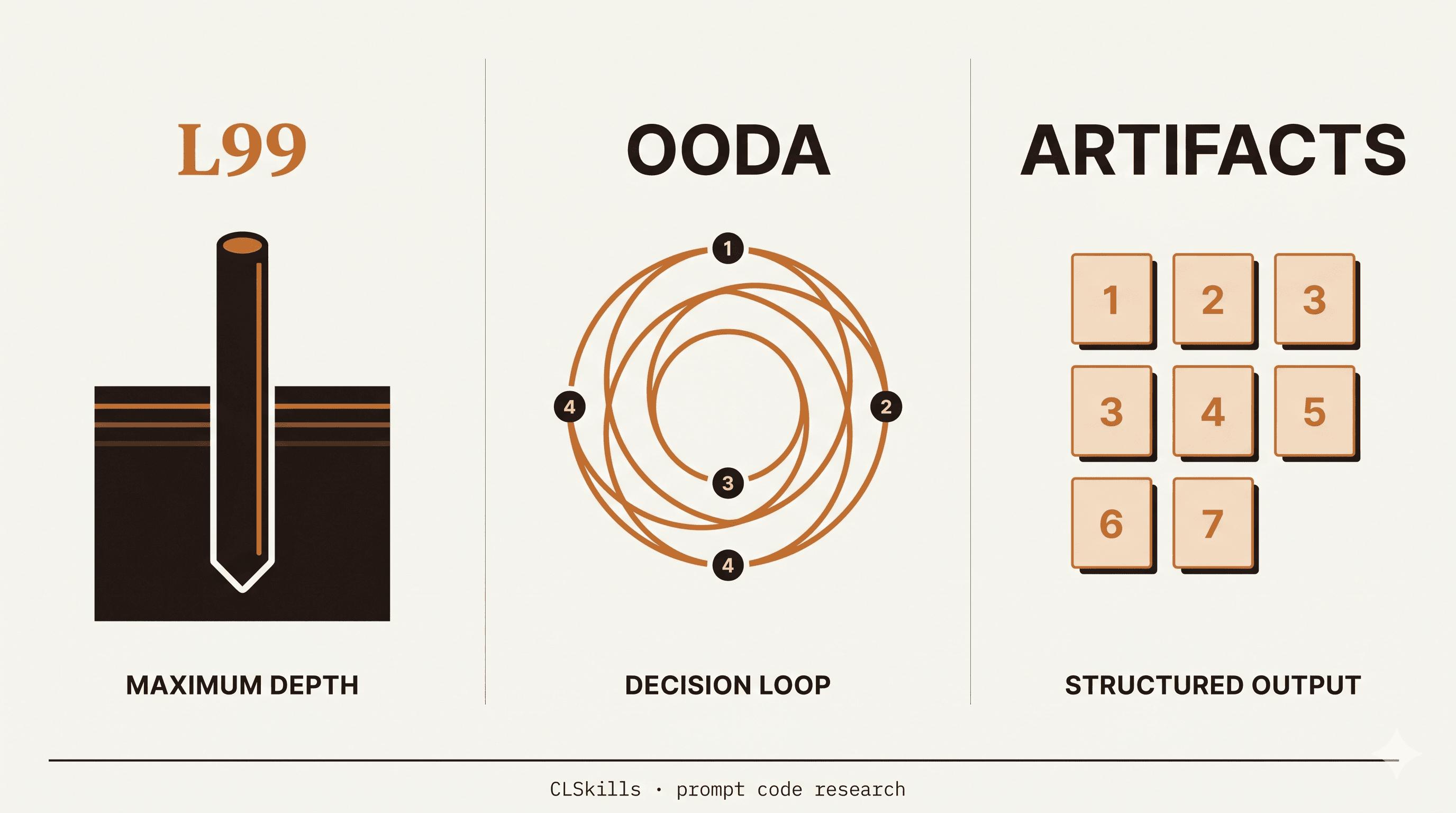

GPT-5.5 dropped this week and the inbox tells me what readers are actually asking: do my Claude prompt codes (L99, OODA, ARTIFACTS, /skeptic, /blindspots, /decompose) still work if I switch?

It's a fair question, and I'm not going to fake an answer.

This post is Part 1: the cross-model transfer framework, the mechanics of how these codes actually work, and per-code predictions with the reasoning behind each. Part 2 ships within 7 days of this post: the actual GPT-5.5 test data, run identically to the Claude tests we documented in the original L99 vs OODA vs ARTIFACTS post and the 6-month update.

If you want the test results delivered to your inbox the day they land, grab the free Claude guide and you're on the weekly list automatically.

How prompt codes actually work (the part most posts skip)

Before we predict anything, you have to understand WHY these codes work in the first place. Most posts about secret codes treat them as magic incantations. They aren't. They're behavior triggers that exploit specific properties of how LLMs were trained.

There are three distinct mechanisms by which a prompt code shifts model behavior:

Mechanism 1: Format-pattern recognition. Codes like ARTIFACTS work because the training data contains many examples of "ARTIFACT 1: ... ARTIFACT 2: ..." structured output. The model has learned to recognize that pattern and produce structured output when invoked.

Mechanism 2: Community-discovered convention. Codes like L99 work because developers have used them on social media, in blog posts, and in shared prompt libraries. The model's training data includes those usage patterns, so it has learned to associate L99 with the specific behavior community use has reinforced (in this case, decisive non-hedged answers).

Mechanism 3: Instruction-following on a structured prompt. Codes like OODA work partly because Claude has seen the OBSERVE/ORIENT/DECIDE/ACT framework discussed in military and management contexts, and partly because the framework is concrete enough that any reasonable model can follow it as a structured instruction.

These three mechanisms transfer differently across models. That's the core insight.

The cross-model transfer framework

If a prompt code relies on Mechanism 3 (instruction-following on a clear structured prompt), it will mostly transfer to any frontier model. OODA tells the model to break its answer into four labeled phases. Any model that can follow instructions can do that. Maybe the substance differs, but the structure transfers.

If a code relies on Mechanism 2 (community-discovered convention), transfer is uncertain. Community usage on Claude is a different distribution than community usage on GPT. The Claude-specific community has built up around L99, /skeptic, /blindspots over the last year through Twitter threads, Reddit posts, and Substack writeups. GPT users have their own conventions (XML-tagged prompts, Custom Instructions, JSON-mode tricks). The two communities overlap but they don't fully share their codes.

If a code relies on Mechanism 1 (format-pattern recognition) AND the format is generic enough to appear in any model's training corpus (numbered lists, headers, markdown structure), it transfers cleanly. ARTIFACTS is mostly Mechanism 1 with a generic format, so it should transfer well.

Boiled down: structure-based codes transfer, community-convention codes are uncertain, and format-recognition codes transfer when the format is generic.
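
If it helps to see that rule of thumb as code, here is a minimal sketch. The `Mechanism` enum, the `expected_transfer` function, and the `generic_format` flag are names I made up for illustration, and the returned labels only restate the framework. They are not measurements. The framework says nothing about format-pattern codes with a non-generic format, so the sketch marks that case as unknown.

```python
from enum import Enum


class Mechanism(Enum):
    FORMAT_PATTERN = 1          # Mechanism 1: format-pattern recognition
    COMMUNITY_CONVENTION = 2    # Mechanism 2: community-discovered convention
    STRUCTURED_INSTRUCTION = 3  # Mechanism 3: instruction-following


def expected_transfer(mechanism: Mechanism, generic_format: bool = True) -> str:
    """Restate the transfer framework as a lookup (qualitative labels only)."""
    if mechanism is Mechanism.STRUCTURED_INSTRUCTION:
        return "mostly transfers"
    if mechanism is Mechanism.COMMUNITY_CONVENTION:
        return "uncertain"
    # Format-pattern codes: the framework only covers the generic-format case.
    if generic_format:
        return "transfers cleanly"
    return "unknown (framework makes no claim)"


print(expected_transfer(Mechanism.STRUCTURED_INSTRUCTION))
print(expected_transfer(Mechanism.COMMUNITY_CONVENTION))
print(expected_transfer(Mechanism.FORMAT_PATTERN, generic_format=True))
```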

Per-code predictions for GPT-5.5

With that framework, here are honest predictions for each of the six codes we documented in the 6-month Claude update.

L99 — likely to work partially

Mechanism: Mostly community convention (Mechanism 2), with a small Mechanism 3 component.

Why it works on Claude: L99 has been associated with "more depth, less hedging" through community usage Claude has been trained on.

Prediction for GPT-5.5: L99 will likely produce SOME response shift, but probably not the same hedge-reduction that makes it useful on Claude. GPT models have a different default verbosity calibration, and the community convention around L99 may or may not appear in GPT-5.5's training data. We won't know until we test.

My bet: 40-60% of the Claude effect transfers. L99 on GPT-5.5 will probably produce slightly longer responses than baseline, but the decisive-opinion behavior won't be as crisp.

OODA — likely to work fully

Mechanism: Mostly structured instruction (Mechanism 3). The OBSERVE/ORIENT/DECIDE/ACT framework is well-documented in military, business, and management literature.

Prediction for GPT-5.5: Should transfer cleanly. Any frontier model can follow a four-phase framework. We will probably see the same format-over-substance failure mode the 6-month Claude update flagged (the model honoring the structure but thinning the analysis), because that failure mode is rooted in RLHF training broadly, not in Claude specifically.

My bet: 80-90% of the effect transfers. OODA on GPT-5.5 will be useful for incident response and noticeably thinner on open-ended strategic prompts.

ARTIFACTS — likely to work fully

Mechanism: Format-pattern recognition with a generic format (Mechanism 1).

Prediction for GPT-5.5: Should transfer well. The numbered-deliverables pattern is too generic and too well-represented in any model's training corpus to fail.

My bet: 80-100% of the effect transfers. ARTIFACTS on GPT-5.5 should produce structured numbered outputs the same way it does on Claude.

/skeptic — likely to fail

Mechanism: Heavy community convention (Mechanism 2). The "challenge framing before answering" behavior on Claude was reinforced by a specific community-driven usage pattern over the last 6 months.

Prediction for GPT-5.5: /skeptic will most likely produce a generic "acknowledged, here's a challenge" response on GPT-5.5 rather than the framing-pushback behavior it produces on Claude. The community signal probably isn't there.

My bet: 20-40% of the effect transfers. /skeptic will probably need to be rewritten as a longer instruction ("before answering, identify two assumptions in my prompt that you'd push back on...") to get the same behavior on GPT-5.5.

/blindspots — likely to fail in code form, succeed if rewritten as an instruction

Mechanism: Community convention.

Prediction for GPT-5.5: The literal /blindspots token probably won't trigger the surface-what-you-haven't-considered behavior on GPT-5.5. But the underlying instruction ("enumerate the things I have probably not checked") works on any model when written explicitly.

My bet: 10-30% of the effect transfers in literal form. 90%+ if you rewrite as an explicit instruction.

/decompose — likely to work fully

Mechanism: Mostly Mechanism 3. Decomposing a fuzzy task into testable subtasks is a generic instruction-following capability.

Prediction for GPT-5.5: Should transfer cleanly. Any frontier model can break a fuzzy goal into a tree of testable subtasks; the literal /decompose token may or may not trigger the behavior, but the underlying capability is there.

My bet: 70-90% of the effect transfers. You may need to spell out the instruction on the first invocation; after that, the model should pick up the pattern in subsequent calls.

Summary table (predictions only, not test data)

| Code | Mechanism | Predicted GPT-5.5 transfer | Confidence |
|---|---|---|---|
| L99 | Community | 40-60% | Medium |
| OODA | Structured | 80-90% | High |
| ARTIFACTS | Format | 80-100% | High |
| /skeptic | Community | 20-40% in literal form | Medium |
| /blindspots | Community | 10-30% literal, 90%+ rewritten | Medium |
| /decompose | Structured | 70-90% | Medium-High |
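
One way to keep ourselves honest is to store the predictions as data now, so the Part 2 numbers can be checked against them mechanically. The sketch below is only that, a sketch: the `Prediction` class and the `verdict` function are hypothetical names, the ranges are the literal-form predictions from the table (the "90%+ rewritten" figure for /blindspots is left out), and how a "transfer percentage" gets measured is an assumption that the real methodology will have to define.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    code: str
    mechanism: str
    low: int    # lower bound of predicted transfer, in percent
    high: int   # upper bound of predicted transfer, in percent
    confidence: str


# Literal-form predictions copied from the summary table.
PREDICTIONS = [
    Prediction("L99", "Community", 40, 60, "Medium"),
    Prediction("OODA", "Structured", 80, 90, "High"),
    Prediction("ARTIFACTS", "Format", 80, 100, "High"),
    Prediction("/skeptic", "Community", 20, 40, "Medium"),
    Prediction("/blindspots", "Community", 10, 30, "Medium"),
    Prediction("/decompose", "Structured", 70, 90, "Medium-High"),
]


def verdict(prediction: Prediction, measured_percent: float) -> str:
    """Compare a measured transfer percentage against the predicted range."""
    if measured_percent < prediction.low:
        return "overestimated"
    if measured_percent > prediction.high:
        return "underestimated"
    return "within range"
```

No measured values are filled in here on purpose. Once the test data exists, calling `verdict` for each code gives a quick list of which predictions held and which need a correction.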

What we're testing this week

The test methodology, identical to what we ran for Claude in the 6-month update post:

1. Six production tasks pulled from real working contexts: an architectural decision, a production incident, a multi-deliverable build, a code review, a customer interview synthesis, and a debugging session. The same six tasks are used for Claude (already documented) and for GPT-5.5.

2. Each task is run three times per code per model (with fresh contexts to avoid cache effects), then blind-rated on three questions: did the response shift in the expected direction, did the behavior match the documented Claude pattern, and what was the substance quality independent of format.

3. Token-cost comparison because GPT-5.5 has different pricing and different token efficiency than Claude. A code that "works" but doubles your spend isn't a clean win.

4. Failure mode documentation because the interesting question isn't "does it work" but "how does it break differently."
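
To give a sense of the size of that plan, here is a rough sketch of the run matrix. It assumes every task is crossed with every code, which is my reading of "three times per code per model", and it leaves out any no-code baseline runs. The `build_run_matrix` function and the dictionary keys are illustrative names, not part of an actual test harness.

```python
from itertools import product

TASKS = [
    "architectural decision",
    "production incident",
    "multi-deliverable build",
    "code review",
    "customer interview synthesis",
    "debugging session",
]
CODES = ["L99", "OODA", "ARTIFACTS", "/skeptic", "/blindspots", "/decompose"]
MODELS = ["Claude", "GPT-5.5"]
RUNS_PER_CELL = 3  # each run is assumed to start from a fresh context


def build_run_matrix():
    """Enumerate every (model, task, code, run) combination in the plan."""
    return [
        {"model": model, "task": task, "code": code, "run": run}
        for model, task, code, run in product(
            MODELS, TASKS, CODES, range(1, RUNS_PER_CELL + 1)
        )
    ]


runs = build_run_matrix()
print(len(runs))  # 2 models * 6 tasks * 6 codes * 3 runs = 216
```

Under those assumptions that is 108 rated responses per model, which is why the token-cost comparison in step 3 matters.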

Results ship in a follow-up post within 7 days. If you want it in your inbox the day it drops, the free guide subscription is the place: clskillshub.com/guide. One email a week, no fluff.

What you can do today

Three practical takeaways while you wait for the test data.

1. If you're using GPT-5.5 right now, rewrite community-convention codes as explicit instructions. Don't type /skeptic and hope it works. Type "before you answer, identify the two assumptions in my framing that are most likely wrong, and address those first." That's the underlying behavior /skeptic invokes on Claude. Spelled out, it works on any model.

2. Stick with structure-based codes regardless of model. OODA, ARTIFACTS, /decompose are all built on instruction-following capabilities that transfer. They might thin out in substance on GPT-5.5 (the format-over-substance failure mode), but they won't fail outright.

3. If you've been buying into the "prompt codes are magic" framing, this is your reminder they aren't. They exploit specific training-data properties. When the model changes, the codes shift. The Claude-tested codes were tested on Claude. They will need to be retested on every new frontier model. We'll do that work for you, but you should always know whether a code you're using has been tested on YOUR model or just inherited from someone else's tests.
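
If you keep prompts in scripts, the first takeaway can be automated. The sketch below swaps a literal code for its spelled-out instruction before the prompt is sent anywhere. The two rewrites are the ones quoted in this post; the `EXPLICIT_INSTRUCTIONS` mapping and the `expand_codes` function are hypothetical names, and the sketch makes no API call to any model.

```python
import re

# Explicit rewrites quoted from this post; extend the mapping with your own.
EXPLICIT_INSTRUCTIONS = {
    "/skeptic": (
        "Before you answer, identify the two assumptions in my framing "
        "that are most likely wrong, and address those first."
    ),
    "/blindspots": "Enumerate the things I have probably not checked.",
}

# Match a code only when it stands alone between whitespace or string edges,
# so paths such as "docs/skeptic" are left untouched.
_CODE_PATTERN = re.compile(
    r"(?<!\S)("
    + "|".join(re.escape(code) for code in EXPLICIT_INSTRUCTIONS)
    + r")(?!\S)"
)


def expand_codes(prompt: str) -> str:
    """Replace known community-convention codes with explicit instructions."""
    return _CODE_PATTERN.sub(
        lambda match: EXPLICIT_INSTRUCTIONS[match.group(1)], prompt
    )


print(expand_codes("/skeptic Should we migrate the billing service to Postgres?"))
```

Structure-based codes like OODA are deliberately not in the mapping, since the prediction is that they transfer as written.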

Honest disclaimer

This post contains predictions, not test results. We are clearly labeling predictions as predictions. The actual GPT-5.5 results ship within 7 days as a follow-up post (we will link it from here when it's live).

We're publishing this prediction post now because the question of "will my Claude prompt codes work on GPT-5.5" is the question being asked this week, and giving you a structured way to think about transfer is more useful than waiting until we have all the data.

If the actual test results contradict any of the predictions above, we will own it. The 6-month L99/OODA/ARTIFACTS post already documented several places where original predictions were wrong (notably OODA narrowing on strategic questions, which we did not anticipate in October). That kind of correction is the whole reason this kind of testing matters.

What about Gemini 3?

Getting asked this too. Same framework applies. Once the GPT-5.5 part is shipped, we'll run the identical six-task battery on Gemini 3 Pro and ship that as a third post. The interesting question isn't which model is best in the abstract; it's which prompt codes transfer cleanly across all three, which fail on each, and what that tells you about what to write into the prompt itself versus what to delegate to a code.

If there's a model you specifically want covered (Llama 3.3, Mistral Large 2, the Chinese frontier models, any of the open-weights successors), reply to your guide subscription email with the model name and we'll factor it into the next test wave.